Today, we are announcing updates to the Azure Maps Web SDK that add support for common spatial file formats, introduce a new data-driven template framework for popups, integrate with several OGC services, and much more.

Spatial IO module

With as little as three lines of code, this module makes it easy to integrate spatial data with the Azure Maps Web SDK. The robust features in this module allow developers to:

◉ Read and write common spatial data files to unlock great spatial data that already exists without having to manually convert between file types. Supported file formats include: KML, KMZ, GPX, GeoRSS, GML, GeoJSON, and CSV files containing columns with spatial information.

◉ Use new tools for reading and writing Well-Known Text (WKT). Well-Known Text is a standard way to represent spatial geometries as a string and is supported by most GIS systems.

◉ Connect to Open Geospatial Consortium (OGC) services and integrate them with the Azure Maps Web SDK.

◉ Overlay Web Map Services (WMS) and Web Map Tile Services (WMTS) as layers on the map.

◉ Query data in a Web Feature Service (WFS).

◉ Overlay complex data sets that contain style information and have them render automatically using minimal code. For example, if your data aligns with the GitHub GeoJSON styling schema, many of its style properties will automatically be used to customize how each shape is rendered.

◉ Leverage high-speed XML and delimited file reader and writer classes.
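To make the Well-Known Text bullet above concrete, here is a minimal sketch of what reading and writing WKT involves for two geometry types. It is an illustration of the format only, not the Spatial IO module's implementation; the names `toWkt`, `fromWkt`, and the geometry interfaces are hypothetical.

```typescript
// Minimal WKT round trip for points and linestrings.
// Hypothetical helper names; not the Spatial IO module's API.

type Position = [number, number]; // [longitude, latitude], as in GeoJSON

interface PointGeometry { type: "Point"; coordinates: Position; }
interface LineStringGeometry { type: "LineString"; coordinates: Position[]; }
type Geometry = PointGeometry | LineStringGeometry;

// Serialize a geometry to WKT, e.g. "POINT (-122.33 47.6)".
function toWkt(geom: Geometry): string {
  const format = (p: Position): string => `${p[0]} ${p[1]}`;
  if (geom.type === "Point") {
    return `POINT (${format(geom.coordinates)})`;
  }
  return `LINESTRING (${geom.coordinates.map(format).join(", ")})`;
}

// Parse "POINT (x y)" or "LINESTRING (x y, x y, ...)" into a geometry.
function fromWkt(wkt: string): Geometry {
  const match = /^\s*(POINT|LINESTRING)\s*\(([^()]*)\)\s*$/i.exec(wkt);
  if (match === null) {
    throw new Error("Unsupported or malformed WKT: " + wkt);
  }
  const positions: Position[] = match[2].split(",").map((text): Position => {
    const parts = text.trim().split(/\s+/).map(Number);
    if (parts.length < 2 || isNaN(parts[0]) || isNaN(parts[1])) {
      throw new Error("Bad coordinate pair: " + text);
    }
    return [parts[0], parts[1]];
  });
  if (match[1].toUpperCase() === "POINT") {
    if (positions.length !== 1) {
      throw new Error("POINT needs exactly one position");
    }
    return { type: "Point", coordinates: positions[0] };
  }
  return { type: "LineString", coordinates: positions };
}
```

Calling `toWkt(fromWkt("POINT (-122.33 47.6)"))` returns the same string, which is the round trip a WKT reader and writer are expected to support. Polygons, multi-geometries, and Z/M coordinates are left out of this sketch.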

Try out these features in the sample gallery.

WMS overlay of world geological survey.
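For context on what a WMS overlay involves, the sketch below builds a WMS 1.3.0 `GetMap` request for a single Web Mercator map tile. The module issues requests like this on your behalf; the function names, the `https://example.com/wms` endpoint, and the `geology` layer name are all hypothetical and only illustrate the shape of the request.

```typescript
// Sketch: a WMS 1.3.0 GetMap URL for one Web Mercator (EPSG:3857) tile.
// Function names, endpoint, and layer name are assumptions for illustration.

const HALF_WORLD = Math.PI * 6378137; // half the Web Mercator extent, in meters

// Bounding box [minX, minY, maxX, maxY] in meters for tile (x, y) at a zoom level.
function tileBounds(x: number, y: number, zoom: number): [number, number, number, number] {
  const size = (2 * HALF_WORLD) / Math.pow(2, zoom); // width of one tile in meters
  const minX = -HALF_WORLD + x * size;
  const maxY = HALF_WORLD - y * size; // tile rows count down from the top
  return [minX, maxY - size, minX + size, maxY];
}

// Build the GetMap URL for a 256 x 256 pixel transparent PNG tile.
function getMapUrl(baseUrl: string, layers: string, x: number, y: number, zoom: number): string {
  const params: [string, string][] = [
    ["SERVICE", "WMS"],
    ["VERSION", "1.3.0"],
    ["REQUEST", "GetMap"],
    ["LAYERS", layers],
    ["STYLES", ""],
    ["CRS", "EPSG:3857"],
    ["BBOX", tileBounds(x, y, zoom).join(",")],
    ["WIDTH", "256"],
    ["HEIGHT", "256"],
    ["FORMAT", "image/png"],
    ["TRANSPARENT", "TRUE"]
  ];
  const query = params.map((kv) => kv[0] + "=" + encodeURIComponent(kv[1])).join("&");
  return baseUrl + "?" + query;
}
```

For example, `getMapUrl("https://example.com/wms", "geology", 0, 0, 0)` requests the whole world as one tile. A real service may require different layer names, styles, or a different WMS version, which its `GetCapabilities` response describes.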

Popup templates

Popup templates make it easy to create data-driven layouts for popups. A template defines how data should be rendered in a popup. In the simplest case, passing a JSON object of data into a popup template generates a key-value table of the properties in the object. Alternatively, a string with placeholders for properties can be used as a template. Details about individual properties can also be specified to alter how they are rendered. For example, a URL can be displayed as a string, an image, a link to a web page, or a mailto link.
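The two simplest template behaviors described above can be sketched in a few lines. This is a conceptual illustration, not the SDK's template engine: the helper names are hypothetical, and the curly-brace placeholder syntax is an assumption made for this example.

```typescript
// Sketch of two popup template ideas: placeholder strings and key-value rows.
// Helper names and the {placeholder} syntax are assumptions for illustration.

type Properties = Record<string, string | number | boolean>;

// Replace each {name} placeholder with the matching property value.
// Placeholders with no matching property are left untouched.
function fillTemplate(template: string, props: Properties): string {
  return template.replace(/\{(\w+)\}/g, (whole: string, key: string): string =>
    Object.prototype.hasOwnProperty.call(props, key) ? String(props[key]) : whole
  );
}

// Default layout: one "key: value" row per property, in insertion order.
function toKeyValueRows(props: Properties): string[] {
  return Object.keys(props).map((key) => key + ": " + String(props[key]));
}
```

With `{ title: "Sample", count: 3 }` as the data, `fillTemplate("{title} has {count} items", data)` fills in both values, while `toKeyValueRows(data)` produces the rows a default key-value table would show.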

A popup template displaying data using a template with multiple layouts.

Additional Web SDK enhancements

◉ Popup auto-anchor: The popup now automatically repositions itself to try to stay within the map view. Previously, the popup always opened centered above the position it was anchored to. Now, if the position it is anchored to is near a corner or edge, the popup adjusts the direction it opens so that it stays within the map view. For example, if the anchored position is in the top right corner of the map, the popup opens down and to the left of the position.

◉ Drawing tools events and editing: The drawing tools module now exposes events and supports editing of shapes. This is great for triggering post-draw scenarios, such as searching within the area the user just drew. Shapes can also be dragged as a whole, which is useful in several scenarios, such as copying and pasting a shape and then dragging it to a new location.

◉ Style picker layout options: The style picker now has two layout options: the standard flyout of icons or a list view of all the styles.

Style picker icon layout.
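The popup auto-anchor behavior in the list above can be pictured as a small decision based on how close the anchored position is to each edge of the map. The sketch below is one plausible way to make that decision, not the SDK's actual logic; `chooseAnchor` and its types are hypothetical. An anchor names the part of the popup that sits on the position, so `"bottom"` opens the popup above the position and `"top-right"` opens it down and to the left.

```typescript
// Sketch: pick a popup anchor so the popup stays inside the map view.
// Hypothetical function; the SDK's real positioning rules may differ.

type Anchor =
  | "bottom" | "top" | "left" | "right"
  | "top-left" | "top-right" | "bottom-left" | "bottom-right";

interface Size { width: number; height: number; }

// x and y are the pixel position of the anchored point,
// measured from the top-left corner of the map.
function chooseAnchor(x: number, y: number, map: Size, popup: Size): Anchor {
  const parts: string[] = [];
  if (y < popup.height) {
    parts.push("top"); // no room above the position: open downward
  } else if (y > map.height - popup.height) {
    parts.push("bottom"); // close to the bottom edge: open upward
  }
  if (x < popup.width / 2) {
    parts.push("left"); // a centered popup would cross the left edge
  } else if (x > map.width - popup.width / 2) {
    parts.push("right"); // a centered popup would cross the right edge
  }
  // With no edge nearby, keep the previous default: centered above the position.
  return parts.length === 0 ? "bottom" : (parts.join("-") as Anchor);
}
```

On an 800 x 600 map with a 200 x 100 popup, a position in the middle of the map keeps the default `"bottom"` anchor, while a position at (790, 10) near the top right corner yields `"top-right"`, matching the example in the bullet.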

Code sample gallery

The Azure Maps code sample gallery has grown to well over 200 samples. Nearly every sample was created in response to a technical query from a developer using Azure Maps.

An Azure Maps Government Cloud sample gallery has also been created and contains all the same samples as the commercial cloud sample gallery, ported over to the government cloud.

Here are a few of the more recently added samples:

The Route along GeoJSON network sample loads a GeoJSON file of line data that represents a network of paths and calculates the shortest path between two points. Drag the pins around on the map to calculate a new path. The network can be any GeoJSON file containing a feature collection of linestrings, such as a transit network, maritime trade routes, or a transmission line network.

Map showing shortest path between points along shipping routes.

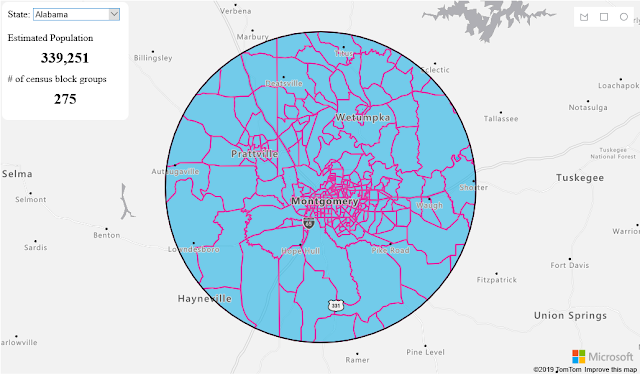
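The idea behind routing along a linestring network can be sketched as follows: treat every linestring vertex as a graph node, connect consecutive vertices with edges weighted by distance, and run a shortest-path search. The sketch uses Dijkstra's algorithm with haversine distances; that choice, and every name in the code, is an assumption for illustration rather than a description of how the sample is implemented.

```typescript
// Sketch: shortest path over a network of linestrings using Dijkstra's algorithm.
// All names are hypothetical; the gallery sample may work differently.

type Position = [number, number]; // [longitude, latitude]
type Graph = Record<string, Record<string, number>>; // node -> neighbor -> meters

const keyOf = (p: Position): string => p[0] + "," + p[1];

// Great-circle distance in meters between two positions.
function haversine(a: Position, b: Position): number {
  const rad = Math.PI / 180;
  const sinLat = Math.sin(((b[1] - a[1]) * rad) / 2);
  const sinLon = Math.sin(((b[0] - a[0]) * rad) / 2);
  const h = sinLat * sinLat + Math.cos(a[1] * rad) * Math.cos(b[1] * rad) * sinLon * sinLon;
  return 2 * 6371000 * Math.asin(Math.sqrt(h));
}

// Connect consecutive vertices of every linestring in both directions.
function buildGraph(lines: Position[][]): Graph {
  const graph: Graph = {};
  for (const line of lines) {
    for (let i = 1; i < line.length; i++) {
      const a = keyOf(line[i - 1]);
      const b = keyOf(line[i]);
      const d = haversine(line[i - 1], line[i]);
      graph[a] = graph[a] || {};
      graph[b] = graph[b] || {};
      graph[a][b] = d;
      graph[b][a] = d;
    }
  }
  return graph;
}

// Returns the node keys along the shortest path, or null if none exists.
function shortestPath(graph: Graph, start: string, end: string): string[] | null {
  const dist: Record<string, number> = {};
  const prev: Record<string, string> = {};
  const done: Record<string, boolean> = {};
  dist[start] = 0;
  while (true) {
    // Pick the unvisited node with the smallest known distance.
    let current: string | null = null;
    for (const node of Object.keys(dist)) {
      if (!done[node] && (current === null || dist[node] < dist[current])) {
        current = node;
      }
    }
    if (current === null) return null; // end is unreachable
    if (current === end) break;
    done[current] = true;
    const neighbors = graph[current] || {};
    for (const next of Object.keys(neighbors)) {
      const candidate = dist[current] + neighbors[next];
      if (dist[next] === undefined || candidate < dist[next]) {
        dist[next] = candidate;
        prev[next] = current;
      }
    }
  }
  // Walk back from the end node to rebuild the path.
  const path = [end];
  while (path[0] !== start) path.unshift(prev[path[0]]);
  return path;
}
```

This version only routes between existing vertices and scans all nodes on each step, which is fine for small networks. Snapping a dragged pin to the nearest point on the network and using a priority queue for large files are left out.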

The Census group block analysis sample uses census block group data to estimate the population within an area drawn by the user. It takes into consideration both the population of each census block group and the amount of overlap each group has with the drawn area.

Map showing aggregated population data for a drawn area.
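One common way to combine population and overlap is area weighting: each block group contributes its population multiplied by the fraction of its area that falls inside the drawn shape. The sketch below assumes population is spread evenly within each block group and that the overlap areas have already been computed by a geometry library; both are assumptions, and the names are hypothetical rather than taken from the sample.

```typescript
// Sketch: area-weighted population estimate for a drawn area.
// Assumes uniform population density inside each block group (an assumption,
// not necessarily what the gallery sample does).

interface BlockGroupOverlap {
  population: number;  // total population of the block group
  area: number;        // total area of the block group
  overlapArea: number; // part of that area inside the drawn shape, same units
}

function estimatePopulation(groups: BlockGroupOverlap[]): number {
  let total = 0;
  for (const group of groups) {
    if (group.area <= 0) continue; // skip degenerate geometries
    // Clamp to [0, 1] to guard against small numeric errors in the overlap.
    const fraction = Math.min(1, Math.max(0, group.overlapArea / group.area));
    total += group.population * fraction;
  }
  return total;
}
```

For instance, a block group of 1,000 people that lies entirely inside the drawn area counts in full, while a group of 500 people with a quarter of its area inside contributes 125, for an estimate of 1,125.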

The Get current weather at a location sample retrieves the current weather for anywhere the user clicks on the map and displays the details in a nicely formatted popup, complete with weather icon.

Map showing weather information for Paris.

Source: microsoft.com